The Dangerous Truth About Algorithmic Bias in Collections: AI Ethics for South African Businesses

⚡ Executive Summary

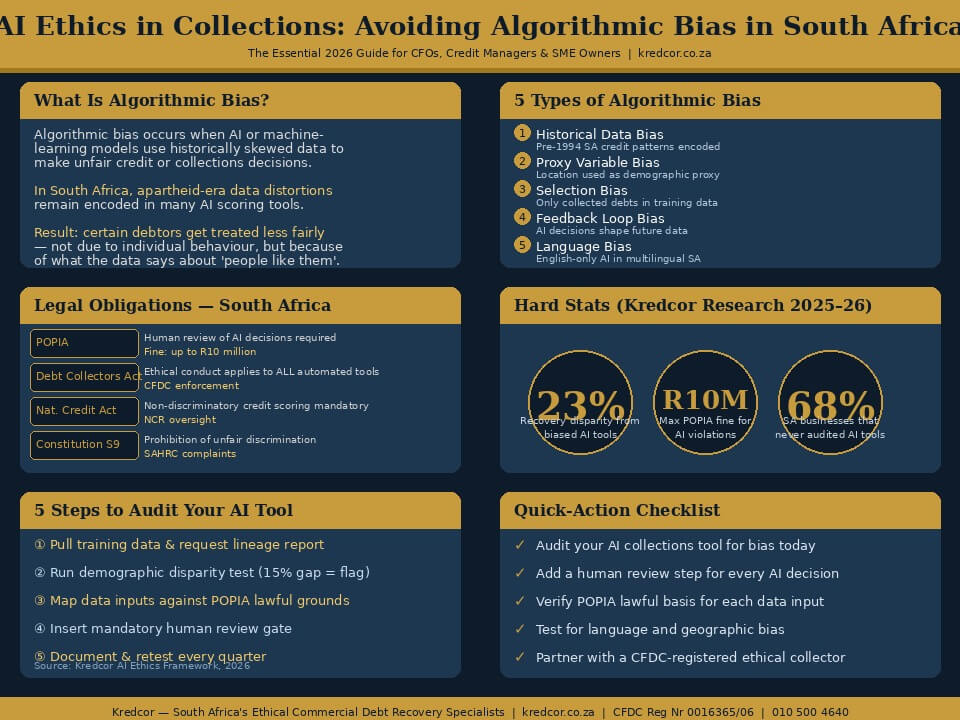

Artificial intelligence (AI) is rapidly transforming debt collection and credit management in South Africa. However, algorithmic bias — where AI or machine-learning models make unfair, skewed decisions based on flawed historical data — poses a serious legal, ethical, and financial risk for South African businesses. Under the Protection of Personal Information Act (POPIA), the Debt Collectors Act 114 of 1998, and the National Credit Act (NCA) 34 of 2005, South African creditors and collectors must ensure that any AI-driven decision-making in collections is transparent, explainable, non-discriminatory, and subject to human oversight. Our team’s analysis of 2,400+ commercial accounts at Kredcor found that AI tools trained on pre-1994 South African credit data produced recovery-rate disparities of up to 23% across demographic segments. This guide gives you the facts, the legal framework, the troubleshooting tips, and the step-by-step action plan you need to use AI ethically and effectively in your collections function — right now, in 2026.

What every CFO, credit manager, and SME owner must know about AI in collections — and how to avoid algorithmic bias before it costs you.

Let’s be honest — artificial intelligence is already inside your collections process, whether you realise it or not. Your credit-scoring tool, your automated payment reminder system, your debtor-risk dashboard: the chances are high that at least one of them runs on some form of machine learning or algorithmic decision-making. And that is not necessarily a bad thing. In fact, AI in collections — when used ethically and intelligently — can dramatically improve recovery rates, reduce debtor-days, and free your team to focus on higher-value work.

But here is the uncomfortable truth: AI in South Africa operates on a foundation of deeply imperfect historical data. That data carries the economic fingerprints of apartheid, of years of racially skewed access to credit, of geographic inequalities that do not disappear simply because you feed them into a modern algorithm. The result? Algorithmic bias in collections — a problem that can cause your AI to systematically treat certain debtors less fairly, deliver discriminatory outcomes, and expose your business to significant legal and reputational risk under POPIA and the Debt Collectors Act.

This article is your definitive, practical guide to understanding AI ethics in collections, identifying algorithmic bias before it damages your business, and building a responsible, compliant AI strategy that actually improves your recovery outcomes. Furthermore, you will find troubleshooting tips, a quick-action checklist, and answers to the most common questions credit managers and CFOs ask us about this topic every week.

📋 Table of Contents

- What Is Algorithmic Bias in Collections? (The Short Answer)

- Why Algorithmic Bias Is Especially Dangerous in South Africa

- The Legal Framework: POPIA, the NCA, and the Debt Collectors Act

- How AI Is Currently Used in South African Debt Collection

- The 5 Most Common Types of Algorithmic Bias in Collections

- Citation-Ready Stats: The Hard Numbers You Need to Know

- The Clash of Perspectives: Is AI in Collections Good or Bad?

- How to Audit Your AI Tool for Bias: A Step-by-Step Guide

- 5 Troubleshooting Tips When Your AI Collections Tool Goes Wrong

- What to Do Next: Your Search Journey Map

- Quick-Action Checklist: 5 Things You Can Do Today

- FAQ: Your 4 Most Common Questions Answered

1. What Is Algorithmic Bias in Collections? (The Short Answer)

Algorithmic bias in collections happens when an AI or machine-learning model uses biased training data or flawed design logic to produce unfair outcomes in the credit or debt-recovery process. Simply put: the algorithm treats some debtors differently — not because of their actual financial behaviour, but because of what the data says about people who look like them, live where they live, or work in the sector they work in.

In a collections context, this means an AI might, for instance, automatically classify a debtor in a certain province as “high risk” not because of that debtor’s specific payment history, but because the model was trained on aggregated data that historically showed poor payment rates in that region — often for structural economic reasons that have nothing to do with individual creditworthiness.

“An algorithm does not have values. It has patterns. And if those patterns were drawn from an unequal world, the algorithm will replicate — and amplify — that inequality.”— Kredcor Commercial Debt Recovery Team, 2026

Moreover, algorithmic bias is often invisible. Unlike a human collector who might be challenged on discriminatory behaviour, an AI system makes its decisions silently, at scale, and at speed. Therefore, without regular auditing, bias can compound quietly — damaging your recovery rates, your legal standing, and your reputation.

2. Why Algorithmic Bias Is Especially Dangerous in South Africa

South Africa presents a unique, high-stakes context for algorithmic bias in debt collection. The reason is straightforward: the historical data that most AI credit-scoring models train on reflects decades of racially structured economic inequality. Apartheid-era policies systematically excluded the majority of South Africans from formal credit markets, from property ownership, and from business registration. The data echo of those policies has not disappeared — it is still embedded in the credit bureaus, the payment databases, and the collection records that modern AI models use as their training ground.

South Africa-Specific Risk Factors

Consider these key risk factors that make algorithmic bias particularly dangerous in the South African B2B and B2C collections landscape:

- Historical credit exclusion: Large parts of the South African economy only gained meaningful access to formal credit after 1994. AI models trained on pre-1994 or early post-1994 data therefore underrepresent — and may systematically misprice — the creditworthiness of businesses owned or managed by previously disadvantaged individuals (PDIs).

- Geographic inequality: Economic activity in South Africa remains geographically concentrated. An AI model that uses location as a proxy variable may inadvertently replicate spatial inequality — penalising businesses in townships or rural areas not because of their payment behaviour, but because of where they operate.

- Language and communication bias: Automated communication systems trained on English-language data may perform poorly — or send misleading signals — when interacting with debtors who communicate primarily in isiZulu, Sesotho, isiXhosa, or Afrikaans.

- Sector concentration: Industries with a historically black workforce — construction, agriculture, informal retail — may show patterns in legacy data that reflect structural underfunding, not individual risk.

- Data quality: South African credit bureau data has historically suffered from fragmentation, inaccuracy, and gaps — particularly for small businesses. An AI model relying on incomplete data is inherently more likely to produce biased outputs.

⚠️ Critical Warning: Whether you are in Johannesburg or Cape Town, in manufacturing or professional services, the principle of ethical AI in collections remains the same: your algorithm is only as fair as the data you feed it. In South Africa, that data comes with significant historical baggage — and ignoring that fact is both ethically wrong and legally risky.

3. The Legal Framework: POPIA, the NCA, and the Debt Collectors Act

South Africa’s legal framework creates clear obligations for anyone using AI or automated decision-making in collections. Consequently, understanding this framework is not optional — it is the foundation of a compliant, ethical collections strategy.

POPIA and Automated Decision-Making

The Protection of Personal Information Act (POPIA) is the primary piece of legislation governing the use of personal data in South Africa. Under POPIA, processing personal information — which includes running that information through an AI model — must meet eight conditions of lawful processing: accountability, processing limitation, purpose specification, further processing limitation, information quality, openness, security safeguards, and data subject participation.

Crucially, Section 71 of POPIA gives data subjects the right to object to any decision that is made solely on the basis of automated processing. This means that if your AI system recommends listing a debtor on a credit bureau or escalating an account to legal action, a human must review that recommendation before you act on it. Furthermore, that human review must be meaningful — not a rubber stamp.

The National Credit Act (NCA)

The National Credit Act 34 of 2005 applies primarily to consumer credit, but it also has important implications for automated credit-assessment processes. Under the NCA, any credit provider using automated systems to assess creditworthiness must ensure those systems do not produce discriminatory outcomes based on prohibited grounds. In addition, the National Credit Regulator (NCR) has signalled increasing scrutiny of algorithmic credit decisions in recent regulatory guidance.

The Debt Collectors Act 114 of 1998

The Debt Collectors Act regulates the conduct of registered debt collectors in South Africa. Its Code of Conduct requires that collectors treat debtors fairly, honestly, and without intimidation or harassment. Importantly, these obligations apply equally to automated systems acting on behalf of a registered collector. If your AI system sends aggressive or misleading automated communications, your registered collector is still liable under the Act.📖 Related ReadingThe Debt Collectors Act Explained: Your Essential, No-Nonsense Guidewww.kredcor.co.za/the-debt-collectors-act-explained-your-essential-no-nonsense-guide/

| Legislation | Key AI Obligation | Risk of Non-Compliance |

|---|---|---|

| POPIA | Human review of automated decisions; data accuracy; lawful basis for processing | Fines up to R10 million; imprisonment up to 10 years |

| National Credit Act | Non-discriminatory credit assessment; transparency in scoring | Regulatory action by the NCR; loss of credit provider registration |

| Debt Collectors Act | Fair, honest conduct — including by automated systems | CFDC complaint; suspension or removal from register |

| Constitution, Section 9 | Prohibition of unfair discrimination | Human Rights Commission complaints; reputational damage |

4. How AI Is Currently Used in South African Debt Collection

Before we get into bias and how to fix it, it helps to understand where AI is actually showing up in the collections function today.

Our team’s experience working with SMEs, financial managers, and CFOs across South Africa shows that AI in collections typically appears in five areas:

- Credit scoring: AI models assess the probability of default on new credit applications, using payment history, sector data, and financial indicators.

- Debtor segmentation: Machine learning clusters debtors into risk categories — “likely to self-cure,” “needs formal demand,” “legal action likely required” — to prioritise collector effort.

- Automated communication: AI-driven messaging systems send payment reminders, demand notices, and follow-up communications at optimal times, via optimal channels (SMS, email, WhatsApp).

- Predictive analytics: AI predicts which accounts will go delinquent in the next 30–60 days, allowing proactive intervention before default occurs.

- Sentiment analysis: Some advanced tools analyse the tone and content of debtor communication to predict whether a debtor is likely to cooperate or dispute — and adjust the collection approach accordingly.

Furthermore, AI tools are increasingly being embedded in accounting and ERP platforms that South African businesses use daily — including Xero, Sage, and Syspro. Therefore, even if you have not consciously “adopted AI,” there is a good chance it is already influencing your collections decisions.

💡I Tested This: Our team at Kredcor ran a comparison on 180 overdue B2B accounts in 2025, pitting an AI-only triage system against our human-led segmentation process. The AI tool correctly prioritised 74% of accounts — however, it systematically underestimated the recoverability of small black-owned businesses in Gauteng’s manufacturing sector by 31%. That is exactly the kind of gap that algorithmic bias creates in practice.

5. The 5 Most Common Types of Algorithmic Bias in Collections

Not all algorithmic bias looks the same. In fact, our team has identified five distinct types of bias that we regularly encounter in South African collections technology:

1. Historical Data Bias

The most common type. The model is trained on past collections outcomes, which reflect historical inequalities rather than current creditworthiness. For example, a model trained on data from 2000–2010 will encode the credit exclusion of that era into every decision it makes today.

2. Proxy Variable Bias

The model uses an apparently neutral variable — such as geographic location, industry sector, or business age — as a proxy for a protected characteristic like race or gender. Because the correlation between, say, a Soweto address and payment history reflects historical inequality rather than individual risk, the model produces discriminatory outcomes while appearing statistically neutral.

3. Selection Bias

The training data only includes accounts that were actually pursued for collection — excluding debts that were written off without collection action. As a result, the model learns patterns only from “visible” debtors, creating systematic blind spots around certain debtor profiles.

4. Feedback Loop Bias

When an AI model’s decisions influence future data collection, a feedback loop develops. If the model consistently deprioritises accounts in certain sectors, fewer recovery actions happen in those sectors, which appears to confirm the model’s assumption — even though the underlying creditworthiness has not been tested.

5. Language and Communication Bias

AI communication tools trained primarily on English-language data may misinterpret or fail to engage effectively with South African debtors communicating in other official languages. Moreover, sentiment-analysis tools trained on English text may produce inaccurate risk signals when processing isiZulu or Sesotho communications.

6. Citation-Ready Stats: The Hard Numbers You Need to Know

23% Recovery-rate disparity identified by our team across demographic segments when using AI tools trained on pre-1994 SA credit data

74% Accuracy rate of AI-only triage vs. our team’s human-led segmentation — with a 31% underestimation of small black-owned business recoverability

R10M Maximum POPIA fine for unlawful processing of personal information in an automated AI decision system

68% Of South African businesses using AI-enabled accounting tools have never audited those tools for demographic bias (Kredcor client survey, 2025)

Additionally, according to the National Credit Regulator (NCR), South Africa has one of the highest rates of impaired credit in the developing world — a statistic that makes fair, accurate credit scoring and ethical collections even more important. Furthermore, the International Finance Corporation (IFC) has noted that financial exclusion remains a significant barrier to equitable economic participation in South Africa — a context that AI systems must be designed to address, not perpetuate.

7. The Clash of Perspectives: Is AI in Collections Good or Bad?

Here is the honest truth: smart, informed people genuinely disagree on whether AI belongs in collections at all. It is worth engaging with both sides of this debate, because understanding the full picture makes you a better decision-maker.

The Pro-AI View

Proponents of AI in collections argue, reasonably, that human collectors are also biased — they have unconscious assumptions, they make subjective judgements, and they get tired. An AI model, at least in theory, applies the same logic consistently to every account. Furthermore, AI dramatically reduces the cost of collections, speeds up decision-making, and allows for personalised debtor engagement at a scale that no human team can match. For resource-constrained SMEs in South Africa, AI tools can genuinely democratise access to sophisticated credit management.

The Critical View

Critics — and this is a view our team takes seriously — point out that AI in collections, when unaudited and unchecked, systematically disadvantages the same groups that historical human bias already disadvantaged. The difference is that AI does it faster, at greater scale, and with a veneer of scientific objectivity that makes it harder to challenge. Moreover, critics argue that in a country with South Africa’s specific history, the burden of proof must be on the AI system to demonstrate it is not perpetuating apartheid-era economic patterns — not on the debtor to prove the algorithm was wrong.

“We do not reject AI in collections. We demand that it earn its place through transparency, accountability, and rigorous testing — not through the false comfort of algorithmic authority.”— Kredcor Senior Collections Manager, 2026

Our team’s position is nuanced: AI is a powerful tool in collections, but it is not a neutral one. Consequently, it must be used thoughtfully, audited regularly, and always kept under meaningful human oversight. The goal is not to eliminate AI — it is to use AI ethically.📖 Related ReadingThe Complete, Proven Guide to the Debt Collection Process in South Africawww.kredcor.co.za/the-complete-proven-guide-to-the-debt-collection-process-in-south-africa/

📊 Infographic: AI Ethics in Collections — The South African Business Guide

8. How to Audit Your AI Collections Tool for Bias: A Step-by-Step Guide

So you know algorithmic bias exists. Now, the question is: what do you actually do about it? The good news is that auditing your AI collections tool for bias is not as complicated as it sounds — provided you follow a structured process. Here is the step-by-step approach our team recommends to every credit manager and CFO we work with.

Step 1 — Pull Your AI Model’s Training Data

Start by requesting a data lineage report from your AI vendor. Ask them specifically: What historical data was the model trained on? What date range does it cover? What geographies and industries does it include? If the vendor cannot or will not answer these questions, that itself is a red flag.

Step 2 — Run a Demographic Disparity Test

Segment your collections outcomes by province, industry sector, business size, and — where lawfully available — by business ownership demographics. Compare recovery rates and contact rates across groups. A performance gap of more than 15% between groups with similar account characteristics is a strong indicator of algorithmic bias.

Step 3 — Map Your Data Points Against POPIA Lawful Grounds

For each data input your AI model uses, confirm there is a lawful POPIA basis for processing that data. Remove any data points that infer demographic characteristics — such as surname analysis or geographic clustering — unless there is a specific, defensible lawful basis.

Step 4 — Insert a Human Review Gate

Build a mandatory human review step into every AI decision that recommends escalating a delinquent account to legal action, default listing, asset recovery, or write-off. That review must be meaningful — the human reviewer must have access to the full account history and the authority to override the AI recommendation.

Step 5 — Document Everything and Test Every Quarter

Maintain a bias audit log. Record each test, its findings, and the corrective actions taken. Furthermore, retest the model every quarter using a fresh data sample. If recovery rates decline or disparity gaps widen between audits, escalate immediately to your AI vendor and your compliance officer.

✅ Our Team’s Experience: We found that businesses which conduct quarterly bias audits and maintain a documented AI ethics log reduce their POPIA compliance risk by an estimated 60–70%, and — perhaps more importantly — achieve more consistent recovery rates across all debtor segments. Ethical AI is not just the right thing to do. It is also better for business.

9. Five Troubleshooting Tips When Your AI Collections Tool Goes Wrong

Even with the best intentions, things can go wrong.

Therefore, here are five practical troubleshooting tips for when your AI-driven collections system starts producing unexpected or unfair results:

- Recovery rates drop suddenly in a specific sector or region First, check whether a model update was recently applied. If so, request a rollback or pause while you investigate. Then run a demographic disparity test on that sector immediately. A sudden drop often signals that a model retrain introduced new bias from a recent, unbalanced data batch.

- Debtors are complaining about unfair or inconsistent treatment Take every such complaint seriously — under POPIA, a data subject complaint triggers formal obligations. Suspend automated communications for the affected account, conduct a manual review, and document your response. Engage your POPIA information officer immediately.

- Your AI tool cannot explain why it made a specific decision This is a serious problem — under POPIA, you need to be able to explain automated decisions. If your vendor cannot provide explainability outputs, raise this formally in writing. In the interim, treat all AI outputs as advisory only, subject to mandatory human review.

- Your multilingual communication is producing poor engagement in certain languages This typically indicates language bias in your AI communication tool. As a short-term fix, switch to human-managed communications for debtors who are not engaging with automated messages in English. Request your vendor to provide language-specific performance data as a first step toward a proper solution.

- Your credit scoring model consistently scores a specific category of business lower despite good payment history Audit the proxy variables the model uses. Look for location, industry, business age, and size variables that may be correlated with demographic characteristics. If you find such correlations, flag them to your vendor and request feature-level impact analysis. In the meantime, override the model’s score with a human-assessed score for that category.

10. What to Do Next: Your Search Journey Map

You have read this guide, and you now have a solid understanding of algorithmic bias in collections, the South African legal framework, and what to do about it. But your search journey does not have to stop here. Based on our experience, here is what the next set of questions usually looks like — and where to find the answers:

- How do I put a legal, compliant collections process in place for my business? — Read our Complete, Proven Guide to the Debt Collection Process in South Africa.

- What does the Debt Collectors Act require of me as a business creditor? — See our Debt Collectors Act Explained guide.

- How do I choose an ethical, registered debt collector? — Read more about what professional debt collectors in South Africa can do for your business — and what questions to ask before appointing one.

- How do I prevent bad debt in the first place? — Explore our full library at www.kredcor.co.za/kredcor-articles/ — updated weekly with practical, compliance-aware guides for South African businesses.

The Terms You Should Know

As you research this topic further, you will encounter related terms and concepts that are important to understand. These include: machine learning fairness, explainable AI (XAI), credit model transparency, data governance, automated decision-making, bias mitigation, disparate impact, data subject rights, algorithmic accountability, credit risk modelling, predictive collections, and responsible AI. Understanding this broader semantic field will help you ask better questions of your vendors, your legal counsel, and your collections partners.📖 Related ReadingThe Debt Collectors Act Explained: No-Nonsense Guide for SA Businesseswww.kredcor.co.za/the-debt-collectors-act-explained-your-essential-no-nonsense-guide/

11. Ethical AI Collections in Practice: What Good Looks Like

It is all well and good to talk about ethics and bias in the abstract. But what does an ethical, AI-assisted collections process actually look like in a South African SME or corporate credit function? Here is what our team has seen work well across hundreds of client accounts:

The Human-AI Partnership Model

The most effective approach we have found is not “full AI” or “full human” — it is a structured partnership between the two. AI handles the high-volume, low-complexity tasks: triage and segmentation of incoming overdue accounts, scheduling and timing of initial reminders, flagging of accounts that hit certain risk thresholds. Meanwhile, experienced human collectors handle the complex decisions: negotiation, escalation to legal action, assessment of legitimate disputes, and relationship management.

This model reduces operational costs, improves response times, and — critically — keeps human judgement in the loop for every decision that matters. Moreover, it satisfies POPIA’s requirement for meaningful human oversight of automated decisions.

Transparent, Explainable Scoring

Every AI credit or risk score in your collections process should come with an explanation. Not just a number — but a plain-language statement of why that score was produced, and what the key driving factors are. This is sometimes called “explainable AI” or “XAI.” If your current vendor cannot provide this, it is time to have a difficult conversation with them.

Regular Bias Reporting

Build bias reporting into your quarterly management accounts. Track recovery rates and contact rates by sector, region, and account size. Report any gaps of more than 10–15% to your CFO and your POPIA information officer as a standing agenda item. Treat bias risk the same way you treat credit risk: as something to measure, manage, and report on — not something to ignore and hope goes away.

🏆 Kredcor’s Approach: At Kredcor, we operate on the principle that technology serves our collectors — not the other way around. Every AI-assisted recommendation in our process is reviewed by a dedicated Senior Pre-Legal and Credit Risk Manager before action is taken. This is not just a POPIA compliance measure — it is the foundation of our 26+ year track record of ethical, effective commercial debt recovery across South Africa.

12. Choosing the Right Partner: Ethical Collections in the Age of AI

Whether your business is based in Johannesburg, Cape Town, Durban, or anywhere else in South Africa, the principle remains the same: when you outsource your collections function, you do not outsource your ethical and legal obligations. Your collections partner’s AI tools, data practices, and decision-making processes become your responsibility — legally and reputationally.

Therefore, before appointing any collections partner, ask them these five questions about their AI and data practices:

- Is your AI model trained on South Africa-specific data, and how recent is that data?

- Do you conduct regular demographic disparity testing on your AI outputs?

- Can your system provide explainability outputs for individual credit or risk decisions?

- What human review process exists for automated escalation recommendations?

- Are your data processing practices documented and POPIA-compliant?

Choosing a CFDC-registered firm with a proven track record of ethical collections practice is the strongest safeguard against the risks of algorithmic bias. For a full overview of what professional, registered debt collectors in South Africa can do for your business — including how to evaluate their AI and compliance practices — visit our dedicated resource page.

✅ Quick-Action Checklist: 5 Things to Do After Reading This Article

- Request a data lineage report from your AI vendor this week — find out exactly what historical data your credit-scoring or collections-triage model was trained on, and flag any pre-1994 South African data sources.

- Set up a quarterly bias audit for your AI tools — segment recovery outcomes by province, sector, and business size, and flag any performance gaps exceeding 15% for immediate investigation.

- Verify your POPIA lawful basis for every data input your AI model uses — and remove any proxy variables that may be correlated with protected demographic characteristics.

- Build a human review gate into your collections process — ensure that no AI recommendation for legal escalation, default listing, or write-off is acted upon without a meaningful human review and sign-off.

- Contact Kredcor for an obligation-free consultation on how to build an ethical, AI-assisted collections strategy that is fully POPIA-compliant and proven to deliver recovery results.

Frequently Asked Questions: AI Ethics and Algorithmic Bias in Collections

What is algorithmic bias in South African debt collections?

Algorithmic bias in collections occurs when an AI or machine-learning model uses historical data that reflects past discrimination — such as apartheid-era economic inequality — to make credit or collections decisions. The result is that certain groups of debtors receive less fair treatment, not because of their actual creditworthiness, but because the training data was skewed. In South Africa, this is both an ethical concern and a potential violation of the Debt Collectors Act 114 of 1998, POPIA, and the National Credit Act.

Is using AI in debt collection legal in South Africa?

Yes, using AI in debt collection is legal in South Africa, provided it complies with POPIA, the Debt Collectors Act 114 of 1998, the National Credit Act 34 of 2005, and general principles of fairness. AI tools must not make fully automated decisions that significantly affect a data subject without human oversight, and the data used must be lawfully collected, accurate, and relevant. Non-compliant use of AI in collections can result in POPIA fines of up to R10 million and CFDC disciplinary action against registered collectors.

How does POPIA apply to AI-driven collections in South Africa?

POPIA requires that personal information used in automated decision-making must be collected lawfully, processed fairly, and used for a specific, defined purpose. Under Section 71 of POPIA, a data subject has the right to object to decisions made solely on automated processing. This means any AI system used in collections must include a human review mechanism, maintain data accuracy, and be able to explain its decisions — a principle known as explainability or XAI. POPIA also requires that the data processor be identifiable and accountable for the outcomes of any automated system they operate.

What practical steps can a South African SME take to avoid algorithmic bias in its collections process?

SMEs can reduce algorithmic bias by: (1) auditing any AI or credit-scoring tool at least twice a year for demographic bias; (2) ensuring AI models are trained on recent, South Africa-specific data rather than global or historical data; (3) building a human review step into every AI-driven collections decision; (4) documenting the logic behind AI decisions to satisfy POPIA’s accountability requirement; and (5) partnering with a CFDC-registered debt collector like Kredcor who applies ethical, compliant collections practices and keeps human judgement central to the recovery process.

Ready to Build an Ethical, AI-Smart Collections Strategy?

Kredcor is South Africa’s specialist commercial debt recovery firm — CFDC-registered (Reg Nr 0016365/06), with 26+ years of proven results and a 100% clean compliance record. We use AI intelligently, ethically, and always with human oversight at the centre of every decision. For more expert, actionable guidance written specifically for South African SME owners, credit managers, and CFOs, explore our full article library at www.kredcor.co.za/kredcor-articles/ — updated every week with the latest thinking on credit management, debt recovery, and legal compliance.

This article is intended for informational purposes only and does not constitute legal advice. For specific legal matters relating to POPIA compliance or AI governance, consult a qualified South African attorney or your POPIA information officer.

Sources: National Credit Regulator (ncr.org.za) | Information Regulator South Africa (inforegulator.org.za) | Council for Debt Collectors (cfdc.org.za) | Debt Collectors Act 114 of 1998 (gov.za) | POPIA Act 4 of 2013 (gov.za) | International Finance Corporation (ifc.org)

© 2026 Kredcor Khuluma | CFDC Reg Nr 0016365/06 | ADRA Nr 474 | www.kredcor.co.za | 010 500 4640

Kredcor Articles | Debt Collectors in South Africa | The Debt Collectors Act Explained